Solving difficult scientific and engineering problems has proven to be the greatest driver of long-term growth and development. However, support for fundamental technological development has become increasingly hard to find in recent years.

From “Amusing Ourselves to Death” by Neil Postman, a book about the possibility that Aldous Huxley, not George Orwell, was right.

This is not just crying wolf. We have all heard the message before: funding for science is low, the space program takes cuts, fewer students choose technical majors, and Justin Bieber is more popular than The Doors.

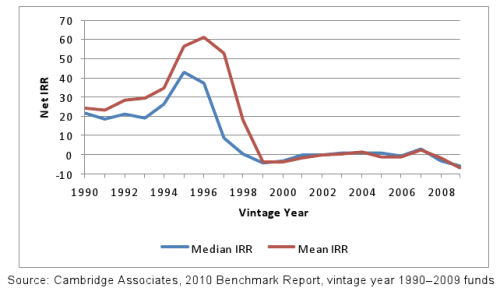

A useful way to judge whether our resources, in sum, are being allocated fruitfully is to look at the pooled returns of venture fund indexes. From its birth in the 1960s through the 1990s, venture capital had excellent returns, and it was often closely associated with the high-capital, slow-growth semiconductor and biotechnology industries.

VC funds have posted negative mean and median returns from 1999 through the present. A small fraction of firms are the exception.

In the new millennium, however, we have encountered a new paradigm for returns among these indexes: a shift from funding transformational technologies to supporting companies that solve incremental or “hype”-based problems. It is a shift from long-term, garden-like growth to something closer to big game hunting. Steve Blank, an investor in Ayasdi, said it best recently:

“If investors have a choice of investing in a blockbuster cancer drug that will pay them nothing for fifteen years or a social media application that can go big in a few years, which do you think they’re going to pick? If you’re a VC firm, you’re phasing out your life science division.”

This perspective goes beyond the bubble argument or the oscillations of markets. It marks the creeping penetration of triviality into our investment culture. Furthermore, it is not the decision of any individual; rather, the whole return-on-investment ecosystem has created an illusion that favors consumer, social, and entertainment products.

Venture is often associated with bravely expanding our horizons: seeking out new lands and bringing back riches that will ensure growth for generations to come. Where will we go after all the shoe stores and matchmakers have migrated online? Once social media has reached nauseating ubiquity? To truly create long-term returns that assure the future financial stability of the investor, the scientist or engineer, and society, we must lead, not follow the bandwagon or join the “me too” culture.

Citations:

“Cambridge Associates LLC U.S. Venture Capital Index® And Selected Benchmark Statistics”, 2011

“What Happened To The Future”, Founders Fund manifesto